Decoding Ethical AI: Guiding Principles for Responsible Implementation

Jul 24, 2023

Artificial intelligence (AI) has revolutionized many aspects of our lives, from healthcare and finance to transportation. While AI brings tremendous benefits, it also raises ethical concerns. As AI systems become more autonomous and take on more decision-making, it is crucial to ensure that they are developed and deployed responsibly. In this article, we explore guiding principles for the responsible implementation of AI, address the key ethical considerations, and offer a framework that organizations can use to navigate the complex landscape of ethical AI.

Transparency and Explainability

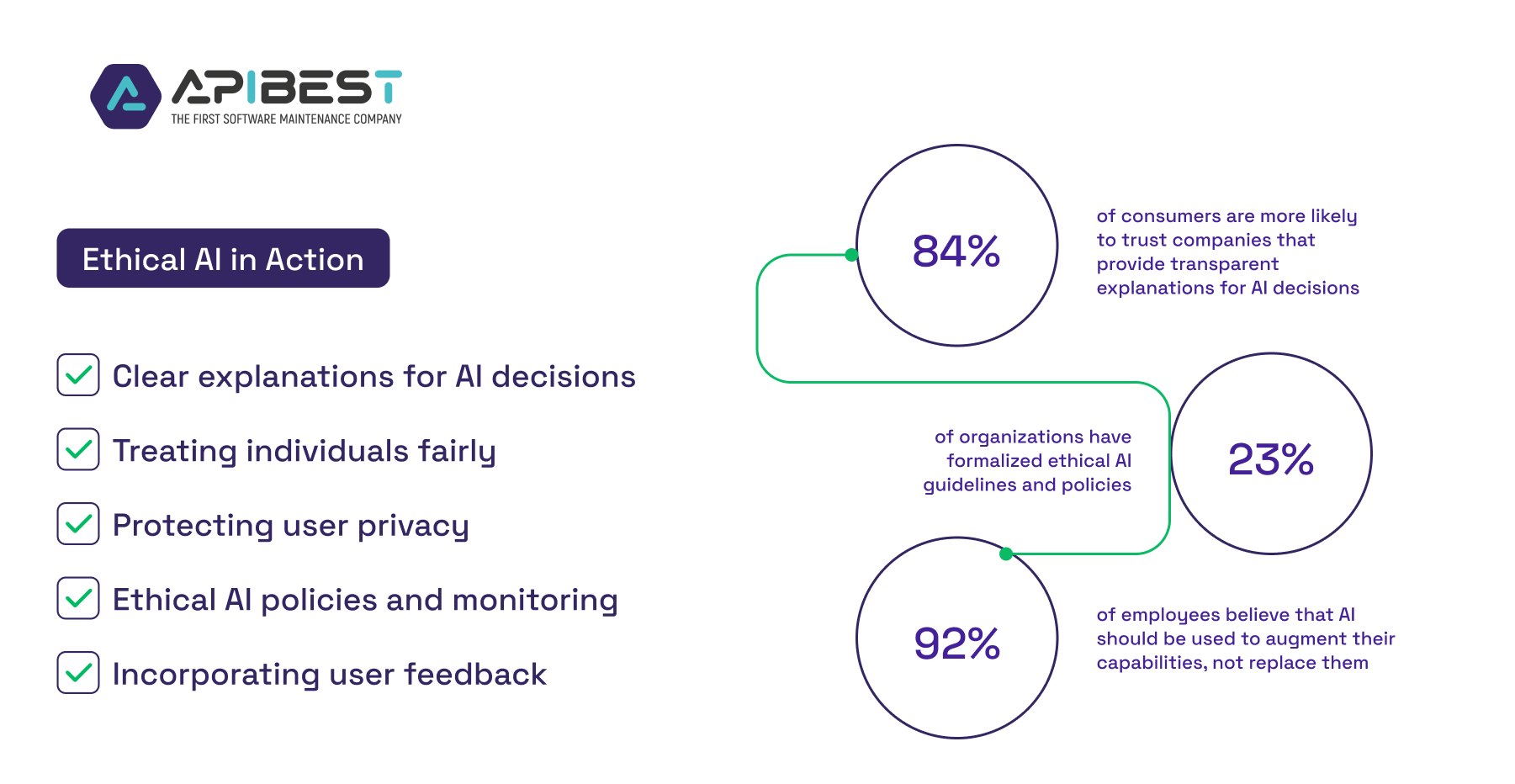

One of the key principles of ethical AI is transparency and explainability. Organizations should strive to build AI systems that can provide clear explanations for their decisions and actions. This builds trust among users and stakeholders and makes accountability possible, because a decision that can be explained can also be questioned and corrected. Techniques from interpretable machine learning and model explainability can shed light on how an AI system reaches its decisions. Consumers value this too: 84% are more likely to trust companies that provide transparent explanations for AI decisions.
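To make the idea of an explanation more concrete, here is a minimal Python sketch. It assumes a simple linear scoring model, whose output is a bias plus a weighted sum of features, so each feature's contribution to a decision can be read off directly as weight * value. The model, its feature names (`income`, `debt_ratio`, `years_employed`) and its weights are hypothetical and invented for illustration; more complex models such as deep neural networks usually need approximate, post-hoc explanation methods instead.

```python
# Hypothetical linear scoring model, invented for illustration only.
WEIGHTS = {"income": 0.4, "debt_ratio": -1.2, "years_employed": 0.1}
BIAS = 0.5


def score(applicant: dict[str, float]) -> float:
    """Model output: BIAS plus the weighted sum of the applicant's features."""
    return BIAS + sum(weight * applicant[name] for name, weight in WEIGHTS.items())


def explain(applicant: dict[str, float]) -> list[tuple[str, float]]:
    """Each feature's contribution (weight * value), largest magnitude first.

    For a linear model, BIAS plus these contributions adds up to score()
    (up to floating-point rounding), so the explanation reflects what the
    model actually computes rather than an approximation of it.
    """
    contributions = [(name, weight * applicant[name]) for name, weight in WEIGHTS.items()]
    return sorted(contributions, key=lambda item: abs(item[1]), reverse=True)


if __name__ == "__main__":
    applicant = {"income": 3.0, "debt_ratio": 0.5, "years_employed": 4.0}
    print(f"score = {score(applicant):.2f}")
    for name, contribution in explain(applicant):
        print(f"{name:>15}: {contribution:+.2f}")
```

For the sample applicant, income contributes +1.20, debt_ratio -0.60 and years_employed +0.40, giving a score of 1.50; the printout shows which factors raised or lowered the score, which is the kind of per-decision explanation a user or auditor could inspect.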

Fairness and Bias Mitigation

Ensuring fairness and mitigating bias is another crucial aspect of ethical AI. AI systems should be designed to treat all individuals fairly and to avoid discrimination based on factors such as race, gender, or socioeconomic background. The data used to train AI models deserves careful scrutiny, because biased or incomplete datasets can lead to biased outcomes. Techniques such as algorithmic auditing and fairness-aware machine learning can help identify and mitigate these biases. The cost of getting this wrong is not only ethical: biased AI algorithms can lead to a loss of $16 billion in revenue globally.
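Algorithmic auditing can start with something as simple as comparing outcome rates across groups. The Python sketch below assumes binary decisions (1 = approved) and a single protected attribute; it computes each group's selection rate and the ratio of the lowest rate to the highest. It flags the result using the "four-fifths rule", a rule of thumb from US employment-selection guidance under which a ratio below 0.8 warrants closer review. This is one heuristic among many fairness metrics, not a complete audit, and the group labels and sample data are invented.

```python
from collections import defaultdict

# Threshold taken from the "four-fifths rule" heuristic (an assumption of this sketch).
FOUR_FIFTHS = 0.8


def selection_rates(groups: list[str], decisions: list[int]) -> dict[str, float]:
    """Share of positive decisions (1) within each group."""
    if len(groups) != len(decisions):
        raise ValueError("groups and decisions must have the same length")
    totals: dict[str, int] = defaultdict(int)
    positives: dict[str, int] = defaultdict(int)
    for group, decision in zip(groups, decisions):
        totals[group] += 1
        positives[group] += decision
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates: dict[str, float]) -> float:
    """Lowest group selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    # If no group received any positive decision, treat the groups as equal.
    return min(rates.values()) / highest if highest > 0 else 1.0


if __name__ == "__main__":
    # Hypothetical audit sample: group A approved 6 of 10, group B approved 3 of 10.
    groups = ["A"] * 10 + ["B"] * 10
    decisions = [1] * 6 + [0] * 4 + [1] * 3 + [0] * 7
    rates = selection_rates(groups, decisions)
    ratio = disparate_impact_ratio(rates)
    print(rates, f"ratio={ratio:.2f}")
    if ratio < FOUR_FIFTHS:
        print("Potential adverse impact: review the model and its training data.")
```

With the sample data, group A's selection rate is 0.6 and group B's is 0.3, giving a ratio of 0.5. That falls below the 0.8 threshold, so the script prints the review message. A real audit would also look at error rates per group, at intersections of attributes, and at how the training data was collected.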

Privacy and Data Protection

Protecting user privacy and ensuring data security are paramount in ethical AI implementation, not least because 81% of consumers are concerned about how companies use their data in AI systems. Organizations should comply with applicable privacy regulations and implement robust data protection measures. These include obtaining informed consent for data collection, using secure methods to store and transmit data, and applying anonymization and encryption techniques to protect sensitive information. By prioritizing privacy and data protection, organizations can build trust and keep user data confidential.
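As one illustration of such data protection measures, the Python sketch below replaces a direct identifier with a keyed hash (HMAC-SHA256, from Python's standard library) and drops other identifying fields before a record is used for training. Note that this is pseudonymization rather than full anonymization: the same e-mail address always maps to the same token, so records can still be linked, and anyone holding the key can re-link tokens to known addresses. Unlike a plain unkeyed hash, however, an attacker without the key cannot simply hash candidate e-mail addresses to reverse the tokens. The record schema and the choice of fields to drop are hypothetical.

```python
import hashlib
import hmac
import secrets

# Hypothetical schema: "email" is the identifier to pseudonymize;
# "name" and "phone" are direct identifiers the model does not need.
ID_FIELD = "email"
DROP_FIELDS = {"name", "phone"}


def pseudonymize(identifier: str, key: bytes) -> str:
    """Return a stable, keyed SHA-256 token (64 hex characters) for an identifier."""
    return hmac.new(key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def prepare_for_training(record: dict, key: bytes) -> dict:
    """Copy a record without direct identifiers, adding a pseudonymous token."""
    cleaned = {
        field: value
        for field, value in record.items()
        if field != ID_FIELD and field not in DROP_FIELDS
    }
    cleaned["user_token"] = pseudonymize(record[ID_FIELD], key)
    return cleaned


if __name__ == "__main__":
    # Random 256-bit key; in practice it should be stored separately
    # from the data it protects (for example, in a secrets manager).
    key = secrets.token_bytes(32)
    record = {"email": "alice@example.com", "name": "Alice",
              "phone": "555-0100", "age": 34}
    print(prepare_for_training(record, key))
```

The output keeps only the non-identifying `age` field plus a `user_token`; the original record is left unchanged. Encrypting the data at rest and in transit would still be needed alongside this step.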

Accountability and Governance

To ensure responsible AI implementation, organizations should establish clear accountability and governance mechanisms. This includes defining roles and responsibilities, establishing ethical AI policies, and putting processes in place to monitor and audit AI systems. With proper governance structures, organizations can ensure that ethical considerations are built into the development, deployment, and ongoing use of AI systems. Many organizations still have work to do here: only 23% have formalized ethical AI guidelines and policies (Source: Gartner).

Human-Centred Design

A human-centred approach is essential in ethical AI implementation. AI systems should be designed to augment human capabilities, enhance decision-making, and improve the overall human experience, rather than to replace people. This matches what employees want: 92% believe that AI should be used to augment their capabilities, not replace them. User feedback and involvement should be incorporated throughout the AI development lifecycle to ensure that AI systems align with human values and needs.

Ethical AI implementation is crucial for harnessing the benefits of AI while upholding the values of fairness, transparency, privacy, and accountability. By following these guiding principles, organizations can navigate the complex landscape of ethical AI and ensure that their AI systems are developed and deployed responsibly. Embracing ethical considerations not only promotes trust in and acceptance of AI technologies but also safeguards against potential risks and unintended consequences. It is our collective responsibility to shape the future of AI in a way that benefits society as a whole.